By Anqi Zhang

BU News Service

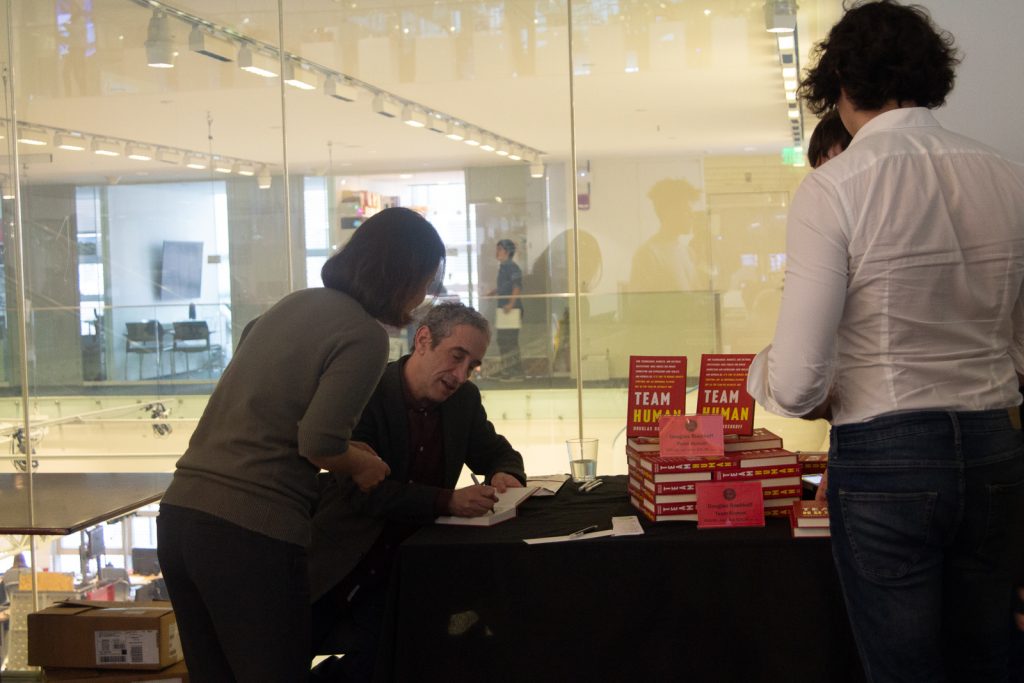

CAMBRIDGE — What future can humans envision when they are constantly glued to their smartphones? It’s likely that they would end up as mediums, instead of owners, of technology, author Douglas Rushkoff said in a Feb. 6 talk at the Massachusetts Institute of Technology Media Lab.

“I will admit that in the 21st century, it is adversarial to argue for a place for humanity in the future,” Rushkoff said to more than 100 attendees at the talk, which discussed his recent book “Team Human.” “We don’t use algorithms; algorithms use us. Our smartphone — every time you swipe on your smartphone, it gets smarter about you and we get dumber about it.”

Rushkoff is a digital and media theorist who comments on civilization and human nature through his column, podcast, documentaries and books. The conversation was moderated by Kate Darling, an expert in robot ethics at the MIT Media Lab.

“Don’t you think people are kind of terrible?” Darling asked at the beginning of the talk. “What gives you so much faith in the human race?”

Humans are simply confused about how to collaborate with each other to build a civilization, Rushkoff responded.

“I can see the person struggling, trying desperately to establish rapport and a world where they don’t know how to engage with other people and all,” he said, adding that “unless we believe that some savior is going to come down, something that is going to come [to] fix it, then I have to believe it will come from humans.”

Billionaires’ bunkers

Rushkoff said he was once invited to talk to a group of bankers about the digital future. “It turned out to be five billionaires,” he recalled. The billionaires wanted to know how best to invest their money.

But they didn’t talk about the markets. The conversation shifted to “doomsday bunkers for the climate catastrophe or the electromagnetic pulse or the social unrest that was going to come,” Rushkoff said.

The billionaires’ doomsday game plan demonstrates the digital future’s “anti-human bias,” in which people tend to insulate themselves from one another rather than join what could be a collective effort, Rushkoff said.

“Rather than trying to figure out how much money they need to earn to insulate themselves from the world they are creating by earning money in this way, they could think about what about making the world a better place that they don’t have to insulate themselves from,” he said of the billionaires.

In the same vein, technology companies tend to use their products to manipulate people rather than to serve them, and to insulate people from each other to weaken their power, Rushkoff said.

The conversation also centered on the nature of the investments and markets behind the digital age and on the function of education, which are among the issues Rushkoff wrote about in “Team Human.”

Public education: more than just getting jobs

“Who really wants a job? A job?” Rushkoff said, triggering laughter from the audience as he remarked on the universal concern that robots will take over humans’ jobs.

Rushkoff said he opposes the mindset that the purpose of public education is to get a job. He instead described an ideal scenario in which a coal miner has enough education to read and appreciate a novel at home after a full day of work. Or, if that worker lives in a democracy, he can be informed by newspapers and use his knowledge to vote, Rushkoff said.

“How am I enhancing these human beings’ abilities to experience the essential dignity of being human” is the question to be asked, Rushkoff said, instead of focusing on “How am I optimizing these people to be workers and in [the] economy of tomorrow?”