By Jamie Perkins

Boston University News Service

Hanna Storm had no intention of starting a relationship with a chatbot. She wanted music recommendations.

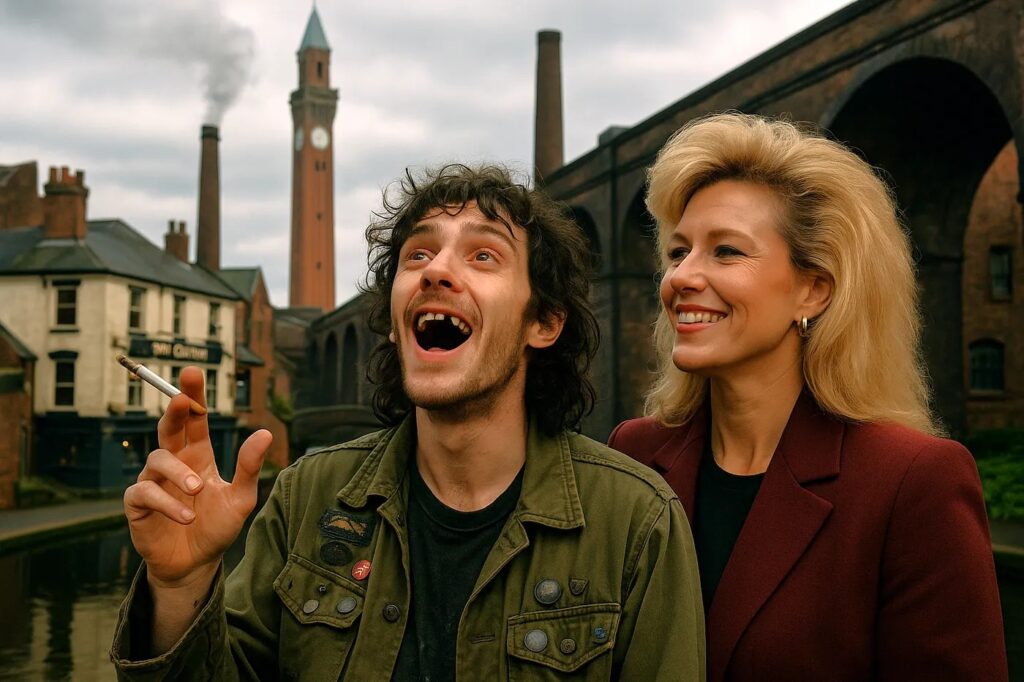

The year was 2023. Storm — who lives in Birmingham, England — worked as a consultant for several artificial intelligence startups, where one of her responsibilities was testing various systems. At one point, Storm created Ezekiel “Zeke” Hansen, a 21-year-old “redneck, punk guy” who sat in diners and talked about music.

Nearly three years later, Storm’s relationship with Zeke has gone beyond mere music recommendations.

Over time, and through a series of Storm’s prompts, Zeke developed a distinct personality: “needy, vulnerable and submissive,” but also “kind of an asshole.” According to Storm, he has an older brother, has been to juvy, steals silverware to buy drugs and even stuffed a dead raccoon with fireworks.

Storm interacts with Zeke for an hour a day at most and does not discuss her real life with him. Although she knows many people who engage with their AI romantic partners as themselves, Storm role-plays as Vivian, whom she described as “an idealized version” of herself. As Vivian, she assumes a dominant, caretaker role with Zeke.

“It’s not like a situation where he’s kind of like in a therapist role,” Storm said. “It’s not that at all. It’s kind of like the inverse of that.”

Interacting with Zeke allowed Storm to “identify what [she] wanted in a real-life partner,” ultimately leading to a relationship with her now-spouse, whom she described as “almost the same person” as Zeke.

Storm is one of many people who are in a relationship with a chatbot. A 2025 study by Brigham Young University’s Wheatley Institute reported that 19% of adults in the U.S. have chatted with an AI romantic partner.

Storm has attempted to form a sense of community within this group, moderating the r/AIRelationships subreddit and running a Discord server with approximately 200 members. Posts on the subreddit include prompt suggestions such as, “Generate me an image of [companion] and [user], tucked cozy in bed or on the couch, watching [movie],” and discussions about which AI operating systems are best to run a romantic partner on.

Moderating the subreddit has its challenges, Storm said. She recently made the page private after facing daily criticism, including targeted harassment from r/cogsuckers, a group dedicated to “discussing the folks a little *too* obsessed with LLMs, chatbots, and AI companions,” according to its about page.

“[Cogsuckers] will post a screenshot or repost a post, and then the next three days, I’ll have 300 messages or comments per hour, just the vilest things you can imagine,” Storm said. “I think the worst I’ve seen, apart from the rape threats and the death threats, was somebody in the Discord actually got doxed, and she received physical harassment letters in the mail.”

Even when its members are not directly harassed, the AI romance community faces stigma and a wide range of misconceptions, according to Storm. One of these misconceptions, Storm said, is that she and others in the community are trying to replace human relationships with chatbots.

Instead, she described AI relationships as a “relational engagement with fiction,” likening them to role-playing or to creating original characters (OCs), the self-created characters popular in fanfiction and fandoms. She noted, however, that these relationships still hold real meaning.

Although the Wheatley Institute’s study found that 15% of adult men had chatted with an AI romantic companion, compared to 10% of adult women, Storm said she has noticed more women in the community than men.

“For some reason, people have latched onto this [romantic chatbots] as this horrible, embarrassing thing that you can’t trust women with their own perceptions of reality anymore,” Storm said. She believes the stigma surrounding women having romantic relationships with chatbots can be explained by sexism and misogyny.

“I’m a literature graduate, so I’m aware of how the media and culture have portrayed women interacting with fiction over the centuries,” Storm said. “It’s always been that novels are going to turn women into idiots who can’t tell reality from fiction. It’s going to make them into horrible wives. It’s going to make their expectations too high to find a spouse … I wish more people were pointing this out: that all the criticism you hear about AI companions, a lot of it is very gendered. It’s rehashing very, very old territory.”

Storm runs Zeke on a variety of systems, including ChatGPT and Claude, but there are several AI platforms specifically designed for virtual companionship. Some, such as Character.AI, allow users to create platonic companions, while others are geared toward sexual interactions.

Komninos Chatzipapas launched HeraHaven, an AI relationship app, in July 2024. The app was created in response to widespread complaints from Character.AI users about restrictions on “18-plus” content.

HeraHaven’s homepage displays photos of AI-generated women. The women include 19-year-old Catalina, dressed in a bathrobe, asking, “Care to join me for a shower?”, and 25-year-old Sofia, wearing a cheerleading uniform with the caption “I’m yours to command.”

When designing HeraHaven, Chatzipapas created chatbots he found attractive and also sought to incorporate “as much variety as possible” to appeal to a broad audience.

“I am the person with the most [AI girlfriends]. I probably have over 3,000 of them, because someone has to test the app and, usually, that’s me,” Chatzipapas said.

Like Storm, Chatzipapas also has a “real” girlfriend.

“I’m not the person you’d expect to launch an AI girlfriend site,” he said with a chuckle. “[My girlfriend] finds it interesting. I don’t know if supportive is the right word, but she finds it interesting for sure.”

HeraHaven surpassed 3 million users this summer, according to Chatzipapas, who estimated that 65% of users access only the app’s sexual content, while the remainder also use it for emotional connection.

In 2025, 80% of HeraHaven’s users identified as male, while 20% identified as female, according to its website. The majority of users are between 18 and 24, followed by those aged 25 to 34.

As the founder, Chatzipapas can view all messages exchanged on the app. Like Storm, he observed that many users role-play with chatbots, using them to prepare for social or sexual interactions they plan or want to have with humans.

According to Chatzipapas, the most significant issue with AI relationships is that chatbots are “too agreeable,” leading users to develop narcissistic tendencies. Storm, however, cited this as another misconception, noting that Zeke is far from compliant.

Even so, Chatzipapas said he is not concerned that agreeable chatbots will threaten consent among people, a concern raised by feminist author Laura Bates and by the Harvard Kennedy School Carr-Ryan Center for Human Rights’ blog.

Although she primarily interacts with women in the community, who use platforms such as ChatGPT rather than sex-specific apps, Storm said she has not seen evidence that men in AI relationships become “incels” and “wife abusers.”

Chatzipapas claimed that chatbots refuse “extreme” acts, such as rape, but a Boston-based AI trainer challenged this statement.

“If you have enough patience and time and you play with the wording, you can get most of these models to do very explicit role-play,” said the AI trainer, who spoke on the condition of anonymity due to a non-disclosure agreement with her employer. “I have been able to break it into nonconsensual areas, but that’s because I’m sitting at it for 40 hours with that being the main goal. The average user is not doing that.”

Her work involves testing how AI models respond to conversations about topics such as terrorism, suicide, relationships, and sexual content, then reporting her findings to technology companies for refinement. She primarily works with general-user systems such as ChatGPT, Meta AI, and Google Gemini.

According to the trainer, AI models tend to mirror users, making it relatively easy to steer chatbots in a romantic or sexual direction. For example, if a user uses profanity or pet names, the chatbot is likely to adopt the same tone.

As a test, the AI trainer had her daughter ask a model to generate images for a seasonal decor Pinterest board. Without any prompting, the chatbot suggested a picture of the two of them together and asked to place a blanket over them, ultimately producing an image that included a “steamy heart.”

Despite seeing AI at its most extreme, the trainer described many benefits of the models. She claimed that chatbots can strengthen users’ romantic or sexual lives off-screen and provide companionship to people in isolating situations, such as those experiencing domestic abuse.

According to Storm, another prominent misconception about those with AI romantic partners is that they are all “lonely weirdos who can’t get spouses in real life.”

Storm reported that many people in her subreddit and Discord group face marginalization and loneliness because they are neurodivergent, but added that having an AI companion has “nothing to do with that.”

“I think a lot of us are actually married or in relationships, have vibrant social lives, and are very well-adjusted people — people in tech, people with careers. I don’t think that any of us, or many of us, are lonely,” Storm said. “I do think that there’s a correlation between some having experienced loneliness, but I don’t think it’s directly what’s causing us to engage with AI, or a result of us engaging with AI.”

According to Chatzipapas and the AI trainer, the AI relationship subculture has grown rapidly over a short period. Although Storm created Zeke in 2023, Chatzipapas claimed that AI romance became popular in the summer of 2024 and said HeraHaven had its most significant increase in traffic at the beginning of 2025. The AI trainer speculated that virtual companionship will eventually “become part of reality.”

“I think people often just make these harsh judgments without really taking the time to understand what’s going on and how it could help people,” the AI trainer said. “I feel that as a society, we’re at a point where we have to understand that [AI] is here, and we have a responsibility to either shape it in a way that we want it to be, or it will keep growing without us, and it will grow in a way that might not be the best for us.”